
Using the orderBy and limit functions, we added a random column, sorted the DataFrame, and selected the top row. In conclusion, we explored different approaches to retrieve a random row from a PySpark DataFrame. Approach 3 involvesĬonverting the DataFrame to an RDD and using the takeSample function to retrieve a The sample function to sample a fraction of the DataFrame's rows. Approach 1 uses the orderBy and limit functions to add a randomĬolumn, sort the DataFrame by that column, and select the top row. The above examples illustrate different approaches to retrieving a random row from a # Approach 3: Converting DataFrame to RDD and sampling

Random_row_2 = df.sample(withReplacement=False, fraction=0.1, seed=42) # Approach 2: Using the `sample` function

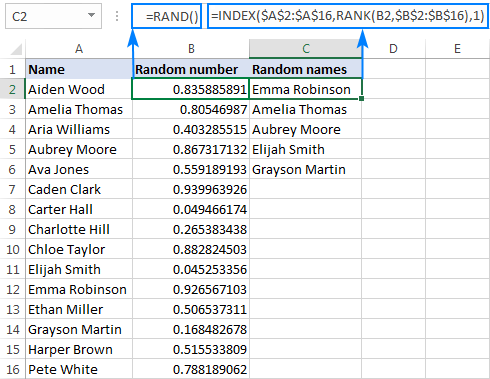

# Approach 1: Using the `orderBy` and `limit` functionsĭf_with_random = df.withColumn("random", rand()) Spark = ()ĭata = ĭf = spark.createDataFrame(data, ) Depending on your specific requirements and the characteristics of your dataset, you can choose the approach that best suits your needs.īelow is the program example that shows all the approaches − Example from pyspark.sql import SparkSession These approaches provide different methods to extract a random row from a PySpark DataFrame. This approach allows you to directly sample from the RDD, effectively retrieving a random row from the original DataFrame. Then, apply the takeSample function on the RDD to randomly select a row. To take a random row from a PySpark DataFrame by converting it to an RDD, use the rdd property of the DataFrame to obtain the RDD representation. We can convert the DataFrame to an RDD and then use the takeSample function to retrieve a random row. PySpark DataFrames can be converted to RDDs (Resilient Distributed Datasets) for more flexibility. Approach 3: Converting DataFrame to RDD and sampling This approach randomly selects a subset of rows from the DataFrame, allowing you to obtain a random row. Specify a fraction of rows to sample, set withReplacement to False for unique rows, and provide a seed for reproducibility. To take a random row from a PySpark DataFrame using sampling, utilize the sample function. By specifying a fraction and setting withReplacement to False, we can sample a single random row. Approach 2: Sampling using the sample functionĪnother approach is to use the sample function to randomly sample rows from the PySpark DataFrame. This approach shuffles the rows randomly, allowing you to obtain a random row from the DataFrame. To take a random row from a PySpark DataFrame using orderBy and limit, add a random column using rand(), order the DataFrame by the random column, and then use limit(1) to select the top row.

We can add a random column to the DataFrame using the rand function, then order the DataFrame by this column, and finally select the top row using the limit function. One approach to selecting a random row from a PySpark DataFrame involves using the orderBy and limit functions. How to take a random row from a PySpark DataFrame?īelow are the Approaches or the methods using which we can take a random row from a PySpark Dataframe − Approach 1: Using the orderBy and limit functions In this article, we explore efficient techniques to tackle this task, discussing different approaches and providing code examples to help you effortlessly extract a random row from a PySpark DataFrame. However, the process of selecting a random row can be challenging due to the distributed nature of Spark. In PySpark, working with large datasets often requires extracting a random row from a DataFrame for various purposes such as sampling or testing.
